Synopsis: High-stakes testing data is stripped of context and deployed to denounce public education—especially urban schools serving Black students and students with disabilities—while doing little to improve learning.

The Ritual

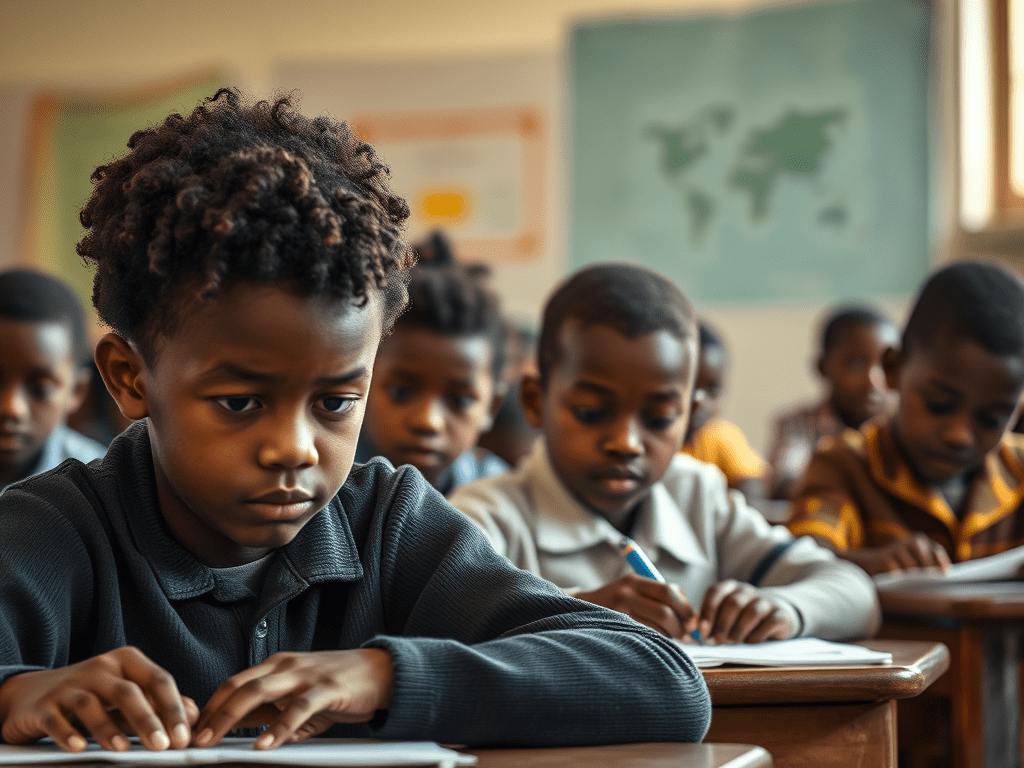

Every spring, a familiar ritual unfolds. Test scores are released, spreadsheets are circulated, headlines are written, and politicians step to microphones with grave expressions. They speak of failure. Of decline. Of urgency. And almost without exception, they point toward the same targets: public schools in urban communities, schools that educate Black children, disabled children, multilingual learners, and students navigating poverty alongside adolescence.

What rarely accompanies these pronouncements is curiosity. Or humility. Or a genuine plan to improve student outcomes.

Instead, high-stakes testing data—thin, partial, and profoundly context-dependent—is wielded as proof of institutional rot. Scores are abstracted from the lives that produced them and repurposed as political talking points, stripped of history, stripped of humanity, and stripped of responsibility. The data becomes less a diagnostic tool than a cudgel: a way to denounce public education without reckoning with the conditions under which it operates.

Misuse of Data

As a Black woman educator, I have watched this cycle repeat for decades. I have watched policymakers invoke “the numbers” while ignoring overcrowded classrooms, underfunded special education services, chronic staff vacancies, and the compounding effects of segregation and disinvestment. I have watched them cite test scores to justify closures, takeovers, and privatization—interventions that disrupt communities and rarely deliver the promised academic gains. What I have not watched them do, with any consistency, is stay long enough to fix what the data actually reveals.

High-stakes tests were never designed to carry the moral weight we assign them. They offer a narrow snapshot of performance on a particular day, filtered through language demands, cultural assumptions, and accommodations that too often fall short. For students receiving special education services, these tests frequently measure compliance more than cognition. For Black students navigating schools shaped by inequitable funding formulas and policy churn, they often reflect systemic neglect more than individual potential.

If we are serious about improving student outcomes, we must stop pretending that test scores alone can tell us who or what is failing. We must stop using data as a verdict and start using it as a starting point. And we must reckon with the racial and political dynamics that make certain schools perpetual symbols of crisis, regardless of the work happening inside them.

Politicians speak as if the scores tell a complete story. They speak as if numbers alone can diagnose complex educational ecosystems. They speak as if the problem is effort or competence rather than structure or political will.

This misuse of data is not accidental. Test scores provide the appearance of objectivity while allowing decision-makers to avoid harder conversations about segregation, disability rights, labor conditions, housing policy, and healthcare access. It is far easier to condemn a school than to confront the political choices that shaped it. It is far easier to point to a percentile than to explain why students lack counselors, social workers, nurses, or stable instructional materials. The data becomes a shield—one that protects those in power from accountability while placing the burden of “failure” squarely on children and educators.

Mindful Use of Data

What is lost in this discourse is the purpose of data itself. Data should illuminate patterns, guide resources, and inform improvement. It should help us ask better questions: Where are students being underserved? What supports are missing? Which interventions are working, and for whom? When data is used to punish rather than to learn, it ceases to be a tool for progress and becomes an instrument of harm.

Consider Malik—not his real name—an eighth grader with an individualized education program for reading. Malik arrived late most mornings because he was responsible for getting his younger sister to school. On test day, his accommodation was technically available, but the proctor assigned to read aloud rotated among three rooms. Malik worked silently, ran out of time, and guessed on the final section. His score later appeared in a public dataset, one more mark in a column used to describe his school as “persistently failing.” No column captured the missed services, the staff shortages, or the fact that Malik’s reading growth across the year—measured by classroom-based assessments—was real and meaningful. The number traveled; the context did not.

In schools across urban America, many educators are already doing the work policymakers claim to want. They are building literacy through culturally responsive curricula, expanding inclusive practices for students with disabilities, and strengthening relationships that sustain learning beyond a test window. Research consistently shows that these approaches—stable staffing, aligned instruction, meaningful family engagement—drive long-term gains. But these successes rarely make headlines, because they do not serve a political agenda.

The political framing also distorts what improvement actually looks like. Decades of research point toward the same drivers of sustained gains: stable staffing; aligned, culturally responsive curricula; inclusive practices that meet students where they are; and deep partnerships with families. None of these can be captured fully by a single test score. All of them require time, coherence, and investment, which is precisely what political cycles and sound-bite governance discourage.

Public education does not need more denunciations. It needs sustained investment, policy coherence, and leaders willing to tell the truth: that the data politicians cite so confidently often indicts the system they govern more than the schools they criticize. The numbers are not a weapon. They are a mirror. And what they reflect depends on whether we are brave enough to look beyond them.

Ethical Use of Data

If we were serious about outcomes, we would use test data the way it was intended: to surface patterns, guide resources, and prompt inquiry. We would ask where students are being underserved and why. We would pair results with investments: smaller class sizes, fully staffed special education teams, wraparound services, and sustained professional learning. We would measure what we value, not simply value what is easiest to measure.

We would also tell the truth about race and power. Urban schools that serve Black students and students with disabilities are not perennial crises by accident. They are shaped by policy choices—zoning, funding formulas, staffing pipelines—that advantage some communities while extracting from others. When politicians denounce these schools using decontextualized data, they are not naming failure; they are deflecting responsibility.

Final Thoughts

To be clear: data matters. Accountability matters. Families deserve transparency about how schools are serving their children. But accountability without context is not accountability—it is theater. And data without responsibility does not drive improvement; it rationalizes retreat.

When test scores are elevated above all else, data stops informing improvement and starts justifying punishment. Schools are labeled, communities are stigmatized, and teachers are blamed for outcomes they did not engineer. The message to students, especially Black students and students with disabilities, is unmistakable: your worth is conditional, and your schools are suspect.

Public education does not need more verdicts delivered from podiums. It needs leaders willing to read the data with care, invest with discipline, and remain accountable for the systems they oversee. The numbers can help—but only if we refuse to let them be weaponized against the very children they are meant to serve.

When test scores are used to score political points instead of to build better schools, the data doesn't expose failure. It documents neglect.

Author Bio

Dr. Hope O’Neil is an African American educator with over 30 years of teaching and leading experience in America’s urban public schools, working at the intersection of literacy, leadership, culturally responsive instruction, and school improvement. Hope has advised districts on inclusive practice and accountability and writes about education policy, race, and the limits of data divorced from context. She helps schools and districts create the conditions that allow students and teachers to thrive. Hope lives and works in the United States.